Fans fight back as ‘disgusting’ Taylor Swift deepfake images shared online

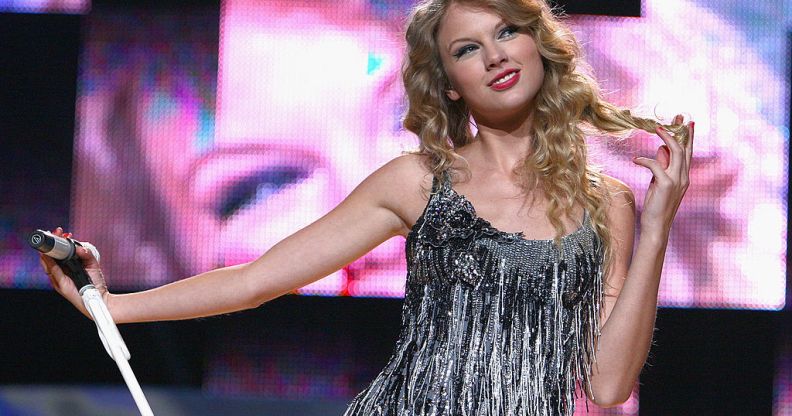

Taylor Swift performs at Madison Square Garden on August 27, 2009 in New York City. (Theo Wargo/WireImage for New York Post)

A recent Taylor Swift AI controversy has brought new focus to the issue of ‘deepfake’ explicit photos of women, after pornographic images of the singer circulated widely on Twitter/X this week.

The platform has rules against graphic media, but the Taylor Swift AI photos, which showed the music star in a variety of sexually suggestive and explicit positions, nonetheless remained on X for around 19 hours.

In that time, it’s believed that the explicit ‘photos’ of Taylor Swift amassed more than 27 million views. The account that originally shared the images was eventually suspended.

The most viewed and shared deepfakes of Swift portrayed her nude in a football stadium. The singer has faced a barrage of misogynistic attacks since she started dating Kansas City Chiefs player Travis Kelce and attending NFL games to support him. Discussing the backlash, Swift said: “I have no awareness of it if I’m being shown too much and pissing off a few dads, Brads, and Chads.”

Taylor Swift’s fans have since been flooding the platform with tweets that read: “Protect Taylor Swift”, presumably to make it harder to find any remaining versions of the images that might be circulating.

The graphic images of Taylor Swift were also circulated on other social media platforms and message boards, including 4chan, Reddit and Facebook.

A spokesperson for Meta, Facebook’s parent company, told The Independent: “This content violates our policies and we’re removing it from our platforms and taking action against accounts that posted it.”

What are deepfakes?

Deepfakes are synthetic media: videos, images or audio created or manipulated using artificial intelligence (AI) and deep learning techniques so that they appear authentic but are actually fabricated or altered. The term “deepfake” is a combination of “deep learning” and “fake.”

Researchers are increasingly worried that deepfakes enable users to create nonconsensual nude images, as in the case of the Taylor Swift AI controversy, as well as embarrassing portrayals of political candidates. AI-generated robocalls faking President Biden’s voice circulated during the New Hampshire primary, and a deepfake of Taylor Swift appeared in recent ads for cookware.

“It’s always been a dark undercurrent of the internet, nonconsensual pornography of various sorts,” Oren Etzioni, a computer science professor at the University of Washington who works on deepfake detection, told The New York Times. “Now it’s a new strain of it that’s particularly noxious.”